|

Contrary to the belief of many, influenza is not just an especially bad cold. It is a highly contagious respiratory disease that strikes suddenly, causing fever, chills, cough, sore throat, runny or stuffy nose, muscle or body aches, headaches, and fatigue. Symptoms can persist for weeks and in some instances lead to hospitalization or death. Pregnant women, seniors, young children, and people with certain health conditions are at elevated risk for serious complications.

The best prevention is annual flu vaccination. Flu shots are recommended for everyone over age 6 months, except for individuals with allergies to chicken eggs or to certain medications and preservatives, individuals who have experienced a severe reaction to flu vaccination in the past, and individuals who have contracted Guillain-Barré Syndrome within six weeks after a previous flu vaccination. People with a moderate-to-severe illness with a fever should wait until they have recovered. Seniors may not respond adequately to standard-dose flu shots and can be given a higher-dose vaccine.

On October 7, 2016, The New York Times published an article entitled “Let’s Talk a Millennial Into Getting a Flu Shot.” Why were Millennials singled out? Because a survey of urgent care centers found that more than half of the Millennials polled did not intend to be vaccinated. A 27-year-old Times staff member named Jonah volunteered to offer his own excuses and judge how convincing he found the scientific rebuttals (though as Neil deGrasse Tyson observed, “The great thing about science is that it’s true whether or not you believe it”):

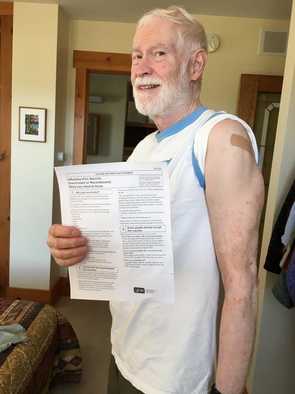

As usual, the very success of flu vaccination, combined with the limited life experience of most young people, has shielded them from the realities of a truly unpleasant flu season. But the particular reluctance of Millennials may also have to do with their being the children of a cohort of parents widely influenced by falsified data linking MMR (measles-mumps-rubella) vaccination to a puzzling apparent increase in autism. This fear, which children born after the mid-1990s imbibed with their mothers' milk, subsequently generalized to all vaccinations and led to widespread distrust of a medical establishment that has overall served us well.

In fact, as discussed at greater length in my previous post, there is no scientific evidence whatsoever to support a relationship between vaccination and autism, and the original journal article has long since been debunked and retracted. Nonetheless, just a few weeks ago an article was published in JAMA Pediatrics showing no association of either influenza or influenza vaccination during pregnancy with autism in the child - a line of research that, however reassuring, would probably not be draining resources disproportionately were it not for this cruel hoax. Sadly, these younger Millennials may consequently fail to protect themselves against a number of painful and potentially deadly adult diseases, including pneumonia, shingles, and others to be discussed in a future post. They may also be passing along their own dangerous antiscientific prejudices to the next generation.

* * * * * * * * * * * * * * *

As you can guess from my husband’s “flu shot couture,” he and I got our immunizations a couple of months ago, which is when I'd originally planned to post this entry (sigh). Drugstore chains, having discovered that offering immunizations is a good way to lure back-to-school shoppers into their stores, now begin advertising as early as August that supplies of the new vaccine are available. Getting inoculated at the beginning of August is not necessary and may even be a bit too early. Since the vaccine’s protective effects start to taper off within a few months, mid-September through early October may actually be the optimal time to be vaccinated and start your "immunity clock" ticking.

If you missed that window, however, it's still not too late to get a 2016-2017 flu shot. Immunity following vaccination builds up in about two weeks, and the peak flu season usually runs through January and February. You can be vaccinated at Walgreens, Rite Aid, and other major drugstore chains; or you can check online for other options within your community. It’s better to have it right now, even in late December, than to skip it altogether.


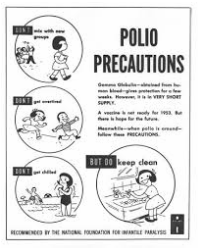

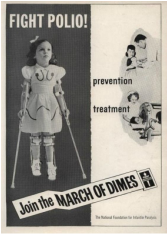

Poliomyelitis, also known as infantile paralysis or polio, is a highly infectious disease that strikes mainly (but not exclusively) children. It starts innocently enough, like many childhood illnesses, with fever, headache, vomiting, and stiffness or pain. Occasionally, however, the disease invades the central nervous system and causes paralysis, sometimes transient but more often permanent. Thankfully this disease can now be prevented. Consequently, most people in this country have never seen an active case of polio, though many polio survivors born before the mid-1950s remain and indeed have been in the forefront of disability rights advocacy. Isolated cases and localized outbreaks of polio have been described for many centuries, but not until the 20th century did major epidemics begin to occur. The first of these in the US was declared in 1916. Theories, mostly wrong, arose to explain its spread. Dogs and cats were thought to be carriers and tens of thousands of household pets were abandoned or euthanized. Other suspects proposed by the semi-informed or uninformed included mosquitoes, sewers, groundwater, pesticides, flies, bedbugs, subways, and mercury, to name just a few of the more plausible suggestions. As so often occurs, immigrants - at that time mostly Italians - were accused of bringing in and spreading the disease, despite the total lack of evidence of polio in Italy at the time. Children with polio, and even sick children who might or might not have polio, were quarantined in hospitals - many of which barred all visitors, even their parents. The worst outbreak of polio ever seen in America took place in the summer of 1952. Nearly 58,000 cases were reported; of these, 3,145 died and over 20,000 were left with some degree of paralysis. I was nine years old at the time. By then the disease was pretty clearly endemic and people were no longer blaming immigrants, or at least not that I recall. 
But I remember panicky parents keeping their children away from swimming pools and movie theaters. I remember a neighboring girl - little Linda, barely more than a toddler - wearing metal braces on both legs and walking laboriously with crutches. I remember terrifying photos in the newspaper of children imprisoned in iron lungs for weeks at a time. I also remember the collective sigh of relief breathed by all of America when a successful killed-virus vaccine was approved for use in 1955. Dr. Jonas Salk, the developer, became a national hero overnight. Parents couldn’t get in line fast enough to have their children vaccinated. The advent of the Salk vaccine, and later of an alternative vaccine developed by Dr. Albert Sabin using attenuated live virus, led to the virtual eradication of polio in this country. (There was no cure for polio then; there still isn't. It can only be prevented by vaccination. The last outbreak in the US occurred in 1979, in unvaccinated Amish communities in three states.)

This is the way it’s supposed to be. We should all celebrate wholeheartedly the miracles of modern medicine that have protected us from the ravages of polio and from the whole panoply of diseases that used to kill off more children than survived. We should rejoice that the days in which women carried, gave birth to, and then lost infant after infant, and families had to welcome a dozen or so children just to ensure that a few would live to adulthood, are a thing of the past. Instead, a cruel hoax has resulted in doubt and distrust. Alarmed by an apparent and as yet unexplained increase in autism, anxious parents looked to modern medicine to provide an answer and hoped they’d found one in a paper by Andrew Wakefield, published in 1998 in a prestigious medical journal, The Lancet. Ironically, the article used falsified data to undermine the very medical advances that have done away with three dangerous childhood diseases - measles, mumps, and rubella - by vilifying the MMR vaccine. Like polio, these diseases are usually mild (I suffered through them and so did almost everyone I knew), but a minority of cases result in devastating complications. This paper has since been totally discredited and withdrawn by the editors of The Lancet, but once out of the bottle the genie refuses to go back in. It has turned into a bonanza for Wakefield, who despite having been stripped of his medical license for unethical behavior has achieved celebrity status among parents desperate for some explanation for autism (which coincidentally emerges around the same time as children receive their MMR vaccinations) and continues to profiteer from their misfortune. 
It has also created a generation of “antivax” parents who distrust not just the MMR vaccine but all vaccines, and indeed, the medical profession and science in general - parents so frightened and suspicious that they are willing to break the social contract whereby we all agree to take a vanishingly small risk to protect the larger community from a much greater danger.

A recent NY Times article by Donald G. McNeil Jr. explored the remarkable similarities between the polio outbreak of 1916 and the current outbreak of another mystifying and terrifying disease, Zika, exactly 100 years later. As McNeil points out, many of the features that characterize our response to Zika, including ugly ethnic prejudice and ineffective measures based on a proliferation of unsubstantiated theories, echo those that emerged in the early days of the polio epidemic. Polio was ultimately banished not by fear-induced lashing out at newcomers and grasping at straws but by the development of an effective vaccine. “All of which may happen with Zika,” says McNeil, “although it can’t happen too soon.”

No one could wish for Zika to last a moment longer than necessary. But wouldn't it be remarkable if today’s “antivax” parents, most of whom have themselves been protected by polio vaccination and never experienced anything comparable to what I witnessed as a child, were somehow able to learn from Zika the lessons that the very successes of modern medicine, like the eradication of polio in the US, have enabled many to forget?

Smallpox vaccination, the first successful vaccine in Western medicine’s toolkit, was developed by Dr. Edward Jenner in 1796 after he observed that milkmaids who had caught cowpox, or Variolae vaccinae, a much milder disease, never developed smallpox. Indeed, the term “vaccine” comes from the Latin word for cow.
The term vaccine originally referred specifically to smallpox vaccination with the cowpox virus; in 1881 Louis Pasteur, to honor Jenner’s achievement, proposed applying the term generically to all protective inoculations. But before there was Edward Jenner, there was Lady Mary Wortley Montagu. Lady Mary, whose husband served as British ambassador to Constantinople, had occasion while living in Turkey to observe a practice called “variolation.” “Variolation” in some form - involving exposure to a weakened form of smallpox - had long been practiced in parts of China, India, Africa, and the Ottoman Empire, and it was clear to Lady Mary that the procedure was both safe and effective. Having lost a brother to smallpox, which claimed the lives of around 35% of its victims, and having herself been left severely and permanently scarred by the disease, she was prepared to use any means at her disposal to spare her children a similar fate. In 1718 she had her 5-year-old son variolated in Turkey; in 1721, after her return to England, she had her daughter variolated as well during a smallpox outbreak, thus introducing the practice to England. I studied Lady Mary’s writings back in the 1970s, in the course of my research on autobiographies of 17th and 18th century English women. Lady Mary wasn’t a physician or a scientist, nor did she see herself as a medical pioneer. She was simply a devoted mother who used her keen powers of observation and the resources at her disposal to protect her children as best she could. She did exactly what most mothers, guided by the best information available to them, would do in her situation. As a noblewoman, she was also aware that her actions could influence British “fashion” and assumed her example would inspire other mothers to do the same in order to protect their own children - as indeed to some extent it did. (She was far less confident that the medical profession would adopt a practice that would reduce their revenue!) 
Smallpox vaccination is one of the great successes of modern medicine. In fact, the last endemic case in the world occurred in Somalia in 1977, and routine vaccination in the US ended in the early 1970s. This is one of the few instances in which not vaccinating is truly safer than vaccinating, since even the rarest or mildest of adverse reactions is unacceptable when the likelihood of anyone’s coming down with smallpox is essentially zero. So far smallpox is the only infectious disease of humans ever to be eradicated. Other candidates such as measles and polio still elude eradication; unfortunately, efforts to eradicate them are hampered by campaigns inspired by politics, religion, or anti-science to undermine confidence in vaccination.

Selfie of my smallpox vaccination scar, almost faded but still visible on my upper arm. You will seldom see this telltale mark on people in their mid-forties and under. Once my generation passes it will probably never be seen again.

One of the joys of our post-professional lives is our little cottage in the pinky of Northern Lower Michigan, at the fringe of the Sleeping Bear Dunes National Lakeshore. Of all the homes we’ve owned during our fifty years of marriage, it’s the only one we ever built; our daughter designed it for us, so it is uncompromisingly tailored to our needs and tastes. My husband enjoys swimming, kayaking, and cycling in the summer and Nordic skiing in the winter. I like hiking and also love the fresh produce I can buy at local farmers' markets throughout the summer, as well as the many destination restaurants within just a few miles of us. Until the Sleeping Bear Dunes area was voted "the most beautiful place in America” by the viewers of Good Morning America in 2011, the attractions of coastal Michigan were a well-kept secret; now the tourists have discovered them, and so long as they treat the land and water with the same respect that we do, we’re happy to share.
As a member of my early-morning walking group exclaims at least once a week, “Girls, we live in Paradise!” Within the last few years, however, a serpent has invaded Paradise. Our beloved Sleeping Bear Dunes has been officially identified as an "emerging Lyme Disease area," meaning that the conditions for an epidemic - environmental factors, the vector (the tiny black-legged deer tick), the pathogen (the Borrelia burgdorferi bacterium), and the host (deer and other wildlife) - are actually emerging here even as I write; that is, just a few years ago infected ticks were typically not found here and today they are. Major bummer. The National Park Service has led the way in encouraging tick awareness and is hoping that tucking your pant legs into your socks will become a new fashion statement. When our daughter enrolled our granddaughter in a sleepover camp just half an hour south of us, she asked about tick precautions and was told they were “too far North” for Lyme Disease to be a concern. Not so! It is important to understand that the disease does not simply fan out from areas where it is already established. Since the pathogen is carried by wildlife including birds, it can emerge wherever environmental conditions are right and deer ticks are present. Consequently, the disease is currently spreading into widely dispersed areas of the country. Lyme Disease is no joke. Around 30,000 cases per year are reported to the CDC (almost certainly a massive underestimate, probably by a factor of ten) in individuals of all ages in the U.S. alone. Initial symptoms can include rash, headache, fever, fatigue, and joint pain. If antibiotic treatment is delayed or withheld, chronic arthritis, serious neurological difficulties, cardiac problems (sufficient in some cases to require a heart transplant), or even death can ensue. Unfortunately, detection, diagnosis, and treatment of Lyme Disease are fraught with uncertainty. 
For starters, the tick bite does not necessarily cause pain or itching, so you may not even realize you’ve been bitten. If you do find a tick on your body, you can only guess at whether it's been feasting long enough to transfer the pathogen - if indeed "your" tick carries the pathogen at all. If you develop the telltale bullseye rash (caused by the rash fading near the bite site over time as it spreads progressively outward), then you almost certainly have the disease and antibiotic treatment should be initiated. In many instances, however, the rash is absent or presents atypically, and at that point, depending on your symptom profile and whether you live in an area where the disease is endemic, it becomes a judgment call on your part as to whether to seek treatment, and then on your doctor's part as to how best to proceed. There is no direct lab test for the pathogen in your blood; it can only be detected by the presence of antibodies, which develop later and possibly not at all if you’ve been treated with antibiotics. Although antibiotic treatment is usually successful, many doctors tend to discount the risk and over-worry about antibiotic use. The overall clinical picture is one of under-treatment.

Last night while we were watching TV, my husband showed me a scab he’d just noticed on his upper arm. Then, when I returned this morning from my walking group outing, he grimly displayed a plastic pill bottle containing a tick he’d found on his underwear. Was the scab he’d scratched the night before from a tick bite? Had the tick he found this morning been attached long enough to cause the disease? We had no way of knowing, but he took his specimen (which will probably live indefinitely, since deer ticks can go for at least a year without food or water) over to our local physician’s office. Because she apparently prefers to err on the side of caution - and perhaps also because she’s been lobbied by the Park rangers - she recommended a course of doxycycline.
At this point in my narrative, you may be wondering why we have no vaccine against Lyme Disease. After all, surely preventive measures would be preferable to the haphazard and nonstandardized approaches to diagnosis and treatment described above, and would have spared my husband the course of antibiotic treatment he now faces.

When I first learned that dogs in high-risk areas are already commonly vaccinated against Lyme Disease, I heaved a sigh of relief: A vaccine for humans couldn't be far behind, right? Wrong! It turns out there was already a vaccine for humans, called LYMErix, licensed in 1998, that had proved safe and effective in Phase III clinical trials and received FDA approval - and the manufacturer withdrew it from the market in 2002! Why? In short, "low vaccine uptake, public concern about adverse effects, and class action lawsuits." Self-styled “Lyme advocates” even claimed it caused arthritis, a classic symptom of the disease itself. There may well have been other factors involved in the withdrawal of the product (the complexity of the dosing schedule, need for further testing in a wider range of volunteers, etc.), but these are exactly the kind of challenges Big Pharma routinely grapples with and solves.

Dr. Stanley Plotkin, Professor Emeritus at the University of Pennsylvania, who nearly lost a son to complications of Lyme Disease, describes the lack of an effective vaccine when it is clearly possible to make one as “a public health failure that is shameful for the medical and public health community.” Although newer and perhaps even more effective candidate vaccines are currently under development, obtaining approval may be an uphill struggle given the inauspicious history of LYMErix.

This is an issue that goes far beyond LYMErix. We think of antivaccine activism as discouraging use of vaccines intended to prevent communicable diseases of childhood, but it also dampens enthusiasm for research and development on other potentially preventable human scourges that may affect people of all ages, adults as well as children.
There's plenty of suffering in this world that we can't prevent; please let's not sabotage efforts to develop scientifically sound and effective preventive measures like those that have freed the world of smallpox and dramatically reduced the incidence of polio.

Anyone who has ever had occasion to walk through an old cemetery has known the heartrending experience of pausing in front of the grave of an infant or small child. Often the child’s grave is near those of the parents. Often there is more than one such grave near the parents’ graves.

A couple of weeks ago I had a very personal version of this experience when I attended a memorial service for a cousin whose ashes were being laid to rest in the family plot in central Massachusetts. Our grandparents, John and Daisy Dahart, were the patriarch and matriarch of the family. Their graves were surrounded by the graves of several of their children, and now by those of some of their grandchildren as well, including most recently my cousin's. One old grave bore the name of my Aunt Ruth Amelia Dahart Hall, John and Daisy’s oldest child, who died tragically in a fire at the age of 27, long before I was born. But mysteriously, there was another name on the stone as well, Laverne Dahart, and the haunting words “10 months.”

Who exactly was Laverne Dahart? Not Ruth’s child, of that I was certain. Guessing she was one of the two Dahart children who had died in early childhood, I followed up when I got home and through the miracle of the internet confirmed that Laverne Evelyn had indeed been born to John and Daisy Dahart on March 10, 1915, almost exactly two years after the birth of my mother in 1913. Although according to the gravestone Laverne had died at ten months, in fact her death certificate indicates that she was even younger, just six months and seventeen days at the time of her death on September 27, 1915.
The doctor who signed it indicated that he had been attending her since September 20, only a week before she died; the course of her final illness had been breathtakingly rapid.

The cause of death was listed as “ileo colitis,” but based on what is currently known it was almost certainly a rotavirus infection, the most common cause of intractable diarrhea and dehydration in children under five. Although rotavirus infections are usually quite treatable, especially in developed countries where rehydration therapy is available and relatively high standards of cleanliness can be maintained, until recently some 2.7 million cases per year occurred in the US, of which 60,000 were serious enough to require hospitalization. Worldwide, over 450,000 children under the age of five still die from rotavirus annually.

Daisy and John had twelve children who lived to adulthood, but anyone who thinks that might have assuaged their grief at the death of their tiny daughter has never been a parent. Likewise, Laverne’s older siblings must have been badly shaken by the loss of a child who just a few days earlier had been sitting among them at the dinner table, propped up in her high chair. Did they help with her care? Did she amuse them with her antics? Was she crawling and getting into mischief? One of my sisters was born two years after I was and I actually remember a few of my first impressions - how little she was, and especially her tiny feet. Did my mother remember anything about Laverne?

I’ll probably never know how Laverne and Ruth came to share a common grave despite having died ten years apart. I can only surmise that an informal marker on Laverne’s grave was replaced with a more permanent stone when it was decided that Ruth would be buried there as well. What I do know is that since the introduction of rotavirus vaccination in the US ten years ago, the incidence and severity of rotavirus infections have declined dramatically. Although I never met my grandmother Daisy, I feel certain she would have given anything in her possession or done anything in her power to save her infant daughter.
The opportunity to do so is now available to all parents except for the few in whose children this demonstrably safe vaccine is contraindicated. I am launching this blog as a way of doing my bit to help counter a cruel hoax that has opened a veritable Pandora’s box by undermining confidence in vaccination, in the medical profession, and in governmental agencies dedicated to protecting and promoting the public health. I am launching it in the hope that no parents will ever know the pain of having unwittingly exposed their children to rotavirus, to which my grandmother’s infant daughter succumbed; or to Haemophilus meningitis, which threatened my own daughter’s life in 1974; or to any of the other terrifying diseases that can now be prevented by vaccination. |


|
